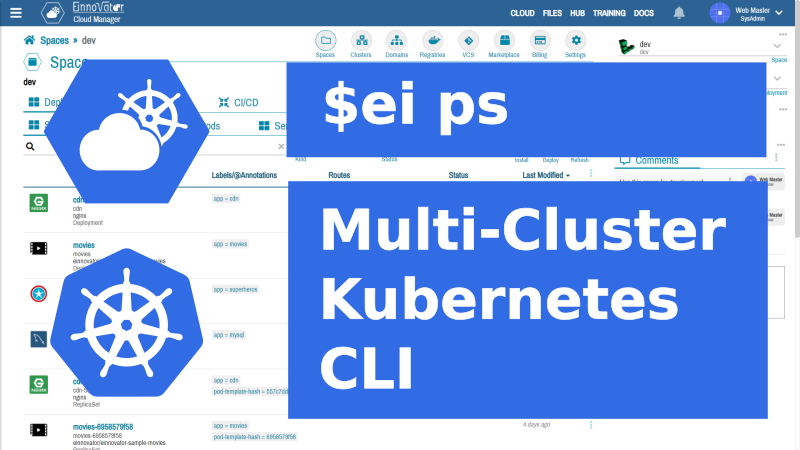

A CLI Tool for Multi-Cluster Kubernetes

Cloud Manager Rocks

Command-line (CLI) tools have a mystique of their own. While a fancy web UI tends to be the preferred experience for most end-users these days, the appeal of CLI tools to many die-hard developers and devops engineers is well known. Most importantly, CLI tools enable scripting and automation in places where an interactive UI cannot be used or stands in the way (e.g. auto-setup scripts, script generation by other tools, GitOps, and ad-hoc devops by typing). With proper built-in help, usage can be rather pleasant, and playing around with the command set makes it easy to learn the concepts and operations that the tool and its backend support. All this to say that we have released the CLI tool for Cloud Manager, our state-of-the-art multi-cluster/multi-cloud Kubernetes PaaS web tool. If you plan to sharpen your skills and team capabilities in Kubernetes, cloud computing, and/or micro-services development in 2021, this is something we believe you should take a serious look at.

The CLI tool supports essentially all of the operations supported by the Cloud Manager UI. The command set and usage model take inspiration from several other CLI tools, including kubectl, docker, and cf (the Cloud Foundry CLI). Like these other tools, our CLI tool, named ei (short for EInnovator CLI), assumes a server is running in the background (the Cloud Manager backend and UI). Most operations are implemented by sending a request to the server.

In the following, I give some background on Kubernetes Cloud Manager and show you how to get started using the CLI tool in your operations and projects. The presentation is mostly example driven, as this is the best way to get familiar with, and master, a CLI tool. The CLI tool provides commands for easy navigation between the command line and the UI, so you don’t need to be radical about your preferred usage model. You can use the CLI tool for some tasks, and the web UI and its well-trimmed dashboards for others. The tool can run as a traditional command-line program, it can run script files, or it can run in interactive mode with command auto-completion.

Cloud Manager Quick Recap

Cloud Manager is built from the ground up to support multiple Kubernetes clusters side-by-side, possibly from multiple cloud providers and/or on-premises. It provides a flat (virtual) Space model, mapped to Kubernetes namespaces in different clusters. This makes switching between clusters as easy as switching between namespaces in a single cluster. For example: the CLI command ls lists all spaces across all clusters (unless a filter option is specified); the command cd cluster/space defines a space in a cluster to be the current or default one for devops (option -n can override this); the command ps lists deployments in the current space (ps -a also lists jobs and cronjobs).

Cloud Manager maintains its own DB to keep some management data, including: cluster access credentials, deployment data, and solution catalogs. This DB is independent of the etcd DB used internally by each Kubernetes cluster. This approach gives Cloud Manager flexibility, as it allows for a simple configuration model. In particular, it lets operations and configuration dispense with Kubernetes’ intricate, boilerplate-heavy, and error-prone YAML manifest files in most cases. Cloud Manager and its DB do not affect cluster operation in any way, so there is zero vendor lock-in when using Cloud Manager.

A Deployment can be started and configured in a single run command, as in docker and kubectl. Alternatively, the deployment can be created first in the Cloud Manager DB without being deployed to the underlying cluster. One or more commands can then be used to further configure the deployment (e.g. DNS routes, environment variables, and volume mounts). When fully configured, the deployment is started with command start (or the equivalent button in the UI). Likewise, the deployment details can be updated freely in the Cloud Manager DB, in multiple steps, after it is running, and the changes only take effect when the deployment is restarted with command restart (or the equivalent UI button).

In this regard, the approach of the Cloud Manager UI and CLI takes more inspiration from the Cloud Foundry configuration and operations model than from the Kubernetes model, which is heavily dependent on YAML manifest files. On the other hand, Cloud Manager preserves all of Kubernetes’ power and flexibility, including the equal treatment of stateless and stateful deployments (which is lacking in Cloud Foundry).

The Cloud Manager UI and CLI provide a common view of stateless and stateful workloads, corresponding to the Deployment and StatefulSet kinds in the Kubernetes model. We believe this is the most convenient way to think about, manage, and operate on deployments. Although stateless deployments are mostly used for applications, and stateful deployments for backend services, there is some overlap in use-cases, especially in micro-service architectures. Jobs and CronJobs are also supported as “first-class citizens” by both the UI and the CLI. On the other hand, ReplicaSets and Pods are considered more of an “implementation detail” of Kubernetes, and the Cloud Manager UI and CLI command set reflect this distinction.

Cloud Manager has built-in support for reusable solutions, organized in catalogs or standalone. While there are other tools for this in the Kubernetes ecosystem, in Cloud Manager the approach is more integrated with the overall devops experience. Multiple packaging formats are supported, and the format of the catalog index file is compatible with Helm. Commands market and install provide a convenient way to explore and deploy reusable solutions, alongside commands for the creation and management of other “application-like” deployments.

Overall, the ei CLI tool can be used as a replacement for kubectl in many, if not most, situations, and likewise for docker, or as a complement to them. For example, kubectl can be used for YAML-manifest-based devops, or for operations and extensions that currently lack support in the CLI. Currently, the ei CLI tool supports building Docker images only inside a cluster (in integration with Tekton pipelines), so docker, or a similar tool, can continue to be used to build images locally if this is your preferred workflow. Where we believe the ei CLI tool excels is in providing an integrated PaaS model for devops and microservices development, with most of the common workflows simplified, including: incremental and easy configuration of deployments, setup of DNS routes and DNS domain management, troubleshooting of deployments, working with multiple image registries and Git VCS providers, solution catalog management, and CI/CD pipelines with optional webhooks.

Getting Started

Installation

To install the tool, download the .tgz file below and extract its contents. After this, it runs as command ei.

wget https://cdn.einnovator.org/cli/ei-latest.tgz

tar -xf ei-latest.tgz

cd einnovator-cli

chmod +x ei #linux/mac only

./ei

For convenience, you may want to add the folder where you expanded the tool to the PATH.

Note: The tool is implemented in Java. So you need to have the JVM command (java) installed and in the PATH.
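A quick pre-flight check can catch both requirements before the first run. The snippet below is a hedged sketch, not part of the tool: the EI_HOME variable and the have_cmd helper are names made up for illustration, and the default folder assumes the archive was extracted in your home directory.

```shell
# Folder where the archive was extracted (assumed location; adjust as needed).
EI_HOME="${EI_HOME:-$HOME/einnovator-cli}"

# Append EI_HOME to PATH only if it is not already there.
case ":$PATH:" in
  *":$EI_HOME:"*) ;;
  *) PATH="$PATH:$EI_HOME"; export PATH ;;
esac

# Return success if the named command can be found on the PATH.
have_cmd() {
  command -v "$1" >/dev/null 2>&1
}

# The tool needs a JVM, so warn early if java is missing.
if have_cmd java; then
  echo "java found at $(command -v java)"
else
  echo "java not found on PATH; install a JVM before running ei" >&2
fi
```

Placing these lines in a shell profile keeps the setting across sessions.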

A welcome/help message is displayed when you type ei without commands. You can also use option -h to get help with any command.

ei

Welcome to the EInnovator CLI (Command-Line Tool).

Your super-duper CLI tool for Multi-Cluster/Multi-Cloud Kubernetes devops and micro-services development.

Type a name to get help on commands for that service:

sso SSO operations

devops Cloud Manager Devops operations

notifications Notifications Hub operations

documents Document Store operations

social Social Hub operations

payments Payment Gateway operations

Generic commands:

ls List Spaces (and other resources)

pwd Show current default/parent resources

cd Change current Space (and Cluster)

version Show CLI version

set Set environment variable

echo Echo expression list

exit Exit interactive mode

help Show this help

login Login to Server

api API operations

token OAuth Token operations

run Create and run Deployment (or Job or CronJob)

kill Stop or delete Deployment (or Job or CronJob)

market List Marketplace Solutions

install Install Solution (standalone or from Catalog)

Usage: ei sso | devops | notifications | documents | social | payments |

ls | pwd | cd | version | set | echo | exit | help args... [-option value]* [--options=value]*

Starting the Services

To use the CLI tool for devops you need to have an instance of the Cloud Manager service running. If you want to use Cloud Manager in multi-user mode, you also need to start the SSO Gateway service. I have covered the details of how to do that in other articles, so I refer you to those. You can also find links to the documentation in the references at the bottom. Cloud Manager is packaged as a Docker image, and several installation options are possible, including: kubectl, docker, helm, and helper scripts for multi-user mode (based on YTT).

Login

The first step in using the CLI is to log in to your installation of Cloud Manager with command login (or alias l). Options -u and -p specify the user credentials, and the trailing parameter (or option -a) specifies the URL of Cloud Manager.

# login to local cloud manager API with username/password

ei login -u username -p password http://localhost:5050

You might prefer to provide your username/password credentials interactively (for security reasons):

# login to remote cloud manager API interactively

ei login https://devops.acme.com

Username: ....

Password: ....

API: http://localhost:5050

The CLI tool detects automatically if Cloud Manager is running in single-user or multi-user/team/enterprise mode with a backing SSO Gateway. The authentication protocol is adapted accordingly (OAuth2 vs. encrypted Basic). With OAuth2 the same token is used across all the services.

By design, you can use the same installation of Cloud Manager to run operations in multiple clusters and multiple clouds. You can also use the CLI tool to log in to different installations of Cloud Manager, each with its own API endpoint, and switch between them with command api set. The CLI tool keeps track of tokens/credentials across different APIs (saved in the local file ~/.ei/config.json), so you don’t need to log in again when you switch API. If a token expires (after several days), you need to log in again. In single-user mode the credentials never expire.

Importing Clusters

Kubernetes devops is all about deploying workloads (applications and services) to a cluster. So you need to have one or more running clusters to make use of the Cloud Manager UI or CLI. If you have Docker Desktop installed, you can use its local pseudo Kubernetes cluster to get started. Otherwise, you can create a cluster on one of the many cloud providers (e.g. DigitalOcean, Linode, Scaleway, AWS, GCP, Azure, IBM Cloud, Oracle, etc.). If you have an on-premises cluster, you can also use that one.

You can import a cluster’s access details into Cloud Manager in several ways. From the UI, you can upload a kubeconfig file, import directly from a cloud provider by entering a provider-specific token, or enter the details manually. With the CLI, the easiest way is to have a kubeconfig file and use command cluster import with option -f to specify the location of the file.

# import cluster from kubectl config file

ei cluster import -f .kube/config

You can check the list of clusters defined with command cluster ls (or ls -c).

# list clusters

ei cluster ls

# list clusters (short-cut)

ei ls -c

ID NAME DISPLAYNAME PROVIDER REGION

3 eu-central DO EU Central DigitalOcean eu-central

4 eu-central-de EU Central - DE EInnovator eu-central-de

13 us-central Linode us-central Linode us-central

15 demo Azure Demo US-East Azure us-east

18 mac MacLocal OnPremise <none>

26 demo demo AWS us-east

32 cluster-1 cluster-1 Google us-central

33 mycluster-free/bu7ahh5d0h3dhiskgmh0 mycluster-free IBM us-central

34 lke7914 lke7914 Linode us-central

You can also filter clusters by region, provider, or name.

# list clusters with filter

ei ls -c --region=us-central

ei ls -c --provider=aws

ei ls -q=us -c

ei ls demo -c

In all listing and get operations, option -o can be used to select the output format, and option -O the field set. Option -b is short for -o=block.

# list clusters with custom columns

ei ls -O=name,provider,region -c

Working with Spaces

Workloads, and resources in general, exist inside virtual namespaces, which are called Spaces in Cloud Manager. To create a space use command space create cluster/name. The cluster/ prefix specifies the cluster where you want the space to be created.

# create space dev in cluster demo

ei space create demo/dev

If you already have a namespace pre-created when you import the cluster, you can use command space attach to make it managed by Cloud Manager.

# attach pre-existing namespace in cluster us-central

ei space attach us-central/dev

The command cd is used to define the current or default space. Operations on workloads imply this space, unless option -n is used to override this.

# make us-central/dev the current space

ei cd us-central/dev

Setting a space as the working default also sets its cluster as the default. So a further cd space without a cluster/ prefix changes to a space in the current cluster. To check the current defaults use command pwd.

# list current parent resources (api, cluster, space, etc.)

ei pwd

API: http://localhost:5005

Cluster: us-central

Space: us-central/test

To list all available spaces across all clusters use command ls (short for space ls).

# list spaces

ei ls

ID NAME DISPLAYNAME CLUSTER PROVIDER REGION

5 prod EInnovator eu-central DigitalOcean eu-central

36 qa test-uscentral us-central Linode us-central

60 dev dev us-central Linode us-central

You can also filter the listing of spaces by cluster, provider, region, or name.

# list spaces with filter

ei ls --cluster=local

ei ls --region=us-central

ei ls --provider=aws

ei ls -q=dev

ei ls dev

You can easily navigate between the CLI tool and the web UI with the view commands. They open a web-browser window/tab on the dashboard of the specified resource.

#open dashboard UI for current space

ei space view

#open dashboard UI for named space

ei space view us-central/prod

#open dashboard UI for named cluster

ei cluster view us-central

#open dashboard UI for current cluster

ei cluster view

Deploying Applications

Command run is used to create, configure, and start a Deployment in a single step. The syntax and some options are similar to kubectl and docker, with extra options and functionality. Option --image, or the argument after the name, specifies the Docker image to use. Option -k specifies the number of instances (pods/replicas) to create. If a deployment is stateful use option -s. Option -o shows the deployment after it is created. To track progress use option --track.

# run (create and start) a deployment with specified image

ei run superheros einnovator/einnovator-sample-superheros -o

# run stateful deployment with specified image and pod/instance count and track progress

ei run superheros --image=einnovator/einnovator-sample-superheros -k=3 --track -s

Command ps lists deployments in current space (or another one if option -n is specified). To list all workloads including Jobs and CronJobs, use ps -a. To list only Jobs use option -j, and only CronJobs option -c.

# list deployments in current space

ei ps

ID NAME DISPLAYNAME KIND STATUS AVAILABLE DESIRED READY AGE

451 superheros Superheros Deployment Running 1 2 1/3 106d

469 cm cm Deployment Running 1 1 1/1 31d

486 nginx Nginx StatefulSet Running 1 1 1/1 16d

504 heros2 heros2 Deployment Running 3 3 3/3 6d

506 einnovator-sso SSO Gateway Deployment Running 1 1 1/1 1d

510 home home Deployment Created <none> 1 0/0 1d

# list deployments in named space

ei ps -nqa

ei ps -neu-central/prod

# list all workloads in named space

ei ps -n eu-central/dev -a

# list jobs and cronjobs in named space in current cluster

ei ps -n dev -j -c
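Because the listing is plain text, it can be post-processed in scripts. The following is a minimal sketch that flags deployments whose READY count is below the total. It assumes the output keeps the whitespace-separated column layout shown in the listing above, and the helper name not_ready is hypothetical. Since DISPLAYNAME may contain spaces, READY is located by counting fields from the right rather than from the left.

```shell
# Print the NAME of every row whose READY column (e.g. 1/3) is incomplete.
# Reads an `ei ps` style listing on stdin; the first line is the header.
not_ready() {
  awk 'NR > 1 && NF >= 3 {
    split($(NF-1), r, "/")          # READY is the second-to-last field
    if (r[1] != r[2]) print $2      # NAME is the second field
  }'
}

# Usage against a live installation (not executed here):
#   ei ps | not_ready
```

Note that a deployment that was created but never started (READY 0/0) is not reported by this check, since both counts are equal.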

A variety of deployment properties can be configured inline at run/creation time. Option --port (or --ports) is used to define the exported ports. Option --stack hints at the stack the application is implemented in, which causes some environment variables to be set automatically. For example, --stack=boot indicates a Spring Boot application, and causes environment variable SERVER_PORT to be set automatically to the value of the (first) configured port.

# run (create and start) a deployment with specified image, exported port, and auto-configure stack-aware environment variables

ei run superheros einnovator/einnovator-sample-superheros --port=80 --stack=boot

Environment variables can also be configured inline at creation with option --env= (or later with command env add). Multiple variable settings can be specified, comma separated. The simplest form of setting is a plain key=value pair. Environment variables whose value references an entry in a ConfigMap are specified with syntax ^configmap.key. Environment variables whose value references an entry in a Secret are specified with syntax ^^secret.key.

# Run a deployment with set environment variables

ei run heros --image=einnovator/einnovator-sample-superheros --port=80 --stack=boot \
  --env=SPRING_PROFILES_ACTIVE=mongodb,THEME=^apps-config.theme,SPRING_DATASOURCE_PASSWORD=^^apps-secret.db-password
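When the variable list grows, building the comma-separated value in a script keeps the command readable. The helper below is a hypothetical sketch (join_csv is not part of ei). It only assembles the string, using the plain, ^configmap.key, and ^^secret.key forms described above. Quoting each setting is a cheap precaution, since ^ is a special character in some shells (for example, zsh with extended globbing).

```shell
# Join all arguments with commas, producing a value for --env=.
join_csv() {
  local IFS=,
  printf '%s\n' "$*"
}

ENV_SPEC=$(join_csv \
  'SPRING_PROFILES_ACTIVE=mongodb' \
  'THEME=^apps-config.theme' \
  'SPRING_DATASOURCE_PASSWORD=^^apps-secret.db-password')

# Print the resulting command instead of running it, so it can be reviewed first.
echo ei run heros --image=einnovator/einnovator-sample-superheros \
  --port=80 --stack=boot "--env=$ENV_SPEC"
```

This sketch does not handle values that themselves contain commas; whether and how the CLI escapes those is not covered here.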

Likewise, volume (claim) mounts can be specified inline at run/creation time with option --mount= (or --mounts=). The disk storage of a deployment is ephemeral by default, i.e. it is discarded on shutdown or restart. Thus, to make storage persistent, volume mounts should be used. The syntax name:/mountPath=size is used for volume mounts. Multiple mounts can be specified, comma separated. ConfigMaps can also be mounted, with syntax name:/mountPath=^configmap.key, or ^configmap.key1+key2 for multiple keys. Secrets can be mounted with syntax name:/mountPath=^^secret.key.

# Run a deployment with a mounted volume

ei run heros einnovator/einnovator-sample-superheros \
  --mount=data:/data=1Gi

# Run a deployment with a mounted volume, configmap, and secret

ei run heros einnovator/einnovator-sample-superheros \
  --mount=data:/data=1Gi,config:/config=^apps-config.theme+username,config2:/config=^^apps-secret.password

As a side note, in micro-service architectures you might also opt to have persistent storage handled by a dedicated service, and have most apps connect to this service.

Incremental Configuration

A deployment can also be created and configured in multiple steps with command deploy create. Without the option --start, the deployment is created only in the Cloud Manager DB and is not started in the underlying cluster. This allows further configuration to be done incrementally before the deployment is started. Command env add (short for deploy env add) is used to add environment variables. Command mount add (short for deploy mount add) is used to add mounts. Command deploy start starts a deployment.

# create, configure, and start deployment

ei deploy create superheros einnovator/einnovator-sample-superheros

ei env add superheros SPRING_PROFILES_ACTIVE testdata

ei env add superheros UI_THEME fantasy

ei mount add superheros data --mountPath=/data --size=3Gi

ei mount add superheros config --mountPath=/config --configmap=superheros-config --items=theme

ei mount add superheros config2 --mountPath=/config --secret=superheros-secret --items=db-password

ei deploy start superheros

Environment variables and mounts can be managed with a variety of commands. Commands env ls and mount ls list the environment variables and mounts of a deployment, respectively. Commands env update and mount update update environment variables and mounts. Commands env delete and mount delete (or aliases env rm and mount rm) remove environment variables and mounts.
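As a hedged sketch of the listing and removal commands in use: the argument order below (deployment name first, then the variable or mount name) is an assumption that mirrors env add and mount add in the example above, so check the built-in help (option -h) for the exact usage. By default the snippet only prints the commands; set EI=ei to run them against a real installation.

```shell
# EI is the command prefix; the default just echoes each command (dry run).
EI=${EI:-echo ei}

$EI env ls superheros                 # list environment variables
$EI mount ls superheros               # list mounts
$EI env rm superheros UI_THEME        # remove a variable (argument order assumed)
$EI mount rm superheros config2       # remove a mount (argument order assumed)
```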

Managing and Trouble-shooting Applications

A deployment can also be updated after creation in one or multiple steps with command deploy update. Changes are local to Cloud Manager and don’t affect the deployment if it is already running in the cluster, until it is restarted with command restart.

# reconfigure deployment and restart

ei deploy update superheros --image=einnovator/einnovator-sample-superheros:1.1

ei env add superheros UI_THEME newtheme

ei mount update uploads --size=2Gi

ei deploy restart superheros

Command deploy stop (or kill) can be used to stop a deployment. This removes the deployment from the cluster, while keeping all the configuration details in the Cloud Manager DB. Common use-cases for this include: saving resources when the deployment is not needed, configuring the deployment further and restarting it at a later time, using the deployment to define a reusable standalone solution, and copying the deployment to other spaces. Command deploy delete (or kill -f) deletes the deployment completely, both in the cluster and in the Cloud Manager DB.

# stop deployment (release resources on cluster, but not deleted in CloudManager)

ei deploy stop superheros

# stop deployment (alias)

ei kill superheros

# delete deployment

ei deploy delete superheros

# delete deployment (alias)

ei kill superheros -f

To troubleshoot a deployment you can check the logs of a pod with command deployment logs (or alias deploy logs). By default, it shows the logs of the first pod. You can specify another pod with option --pod. To show only the last n lines of the log, use option -l.

# show log tail of deployment (first) pod

ei deploy logs superheros -l200

Additionally, you can check the events of a deployment with command deploy events. With option -c it shows “cluster-level” events, rather than “high-level” user-triggered events.

# show events for a deployment

ei deploy events ls superheros --pageSize=30
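Putting logs and events together, a first troubleshooting pass might look like the sketch below. The triage function is hypothetical, the --pod=NAME form is an assumption about the option syntax, and placing -c after the deployment name simply follows the position of --pageSize in the example above. Commands are printed rather than executed unless EI=ei is set.

```shell
# Print (or, with EI=ei, run) the usual first-look commands for a deployment.
# $1 = deployment name, $2 = optional pod name.
triage() {
  ${EI:-echo ei} deploy logs "$1" ${2:+--pod="$2"} -l200
  ${EI:-echo ei} deploy events ls "$1" --pageSize=30
  ${EI:-echo ei} deploy events ls "$1" -c
}

triage superheros
```

Passing a second argument, as in triage superheros some-pod-name, adds the --pod option to the logs command.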

Scaling Applications

Deployments can be scaled in the number of pods/instances/replicas with command deploy scale (short for deploy update -k). Option --track is useful to keep track of progress (e.g. if the instance count is large, or to confirm that the cluster has enough memory/nodes provisioned and that the space quota is sufficient).

# scale deployment to 10 replicas (horizontal scaling)

ei deploy scale superheros 10 --track

Similarly, a deployment can be scaled in the resources (memory, ephemeral disk storage, and CPU share) allocated to each pod/instance/replica with command deploy resources.

# update resources (vertical scaling)

ei deploy resources superheros --mem=2Gi --disk=3Gi

Command pod ls can be used to list actual Pods running for a deployment or for any deployment in the space. Likewise, command replicaset ls (or rs ls) for ReplicaSets.

# list replicas/pods/instances for deployment

ei pod ls superheros

NAME STATUS RESTARTS AGE

superheros-84767b8d94-l978l Running 12d

# list all pods in current space

ei pod ls

# list all replicasets for deployment (history size is configurable)

ei rs ls superheros

# list all replica sets in current space

ei rs ls

DNS Route Management

Deployments can be set up with DNS routes of the form hostname.domain, to be easily accessible by end-users from a web browser. When you want to set up secure TLS/HTTPS access, a domain should be configured with a (wildcard) certificate. In the CLI, command domain create is used to create a domain. If you already have certificate files in the file system, you can use options --crt, --key, and --ca to specify the files for the certificate, private key, and certification authority bundle. If you don’t have a certificate, you can get a free one when you create the domain via the Cloud Manager UI. For development, you may also use unsecured access and skip the certificate.

# create an (unsecure) domain

ei domain create acme.com

# create a secure domain

ei domain create acme.com --crt=crt.pem --key=key.pem --ca=ca.pem

For convenience when creating routes, you can set a domain as the default with command domain set. Otherwise, you need to specify the domain with option -d when creating a route. Command domain ls (or ls -d) lists the created domains.

# List domains and set current/default domain

ei domain ls

ei domain set acme.com

DNS routes can be added to a deployment at start time or at any later time. With commands run, deploy create, and deploy update, a route can be added with option -r. If no name is specified for the route, the deployment name is used. Option -d specifies the domain to use (designated by DNS name, id, or uuid). Multiple routes can be specified, comma separated.

# Run a deployment with a route set automatically

ei run superheros --image=einnovator/einnovator-sample-superheros --port=80 --stack=boot -r

# Run a deployment with a named route set automatically

ei run superheros --image=einnovator/einnovator-sample-superheros --port=80 --stack=boot -r heros

Routes are implemented using Kubernetes Ingresses. By default, each route defines its own ingress. To make routes share a common ingress use option --shared (actually two: one for TLS-secured routes and another for non-TLS routes). Routes can optionally override the certificate of a domain using the same options as for the domain: --crt, --key, and --ca. When creating ingresses, Cloud Manager uses the ingress controller defined in the cluster settings, and falls back to the Nginx ingress controller by default.

Command deploy go provides an easy way to navigate to an app. By default, the app is opened at its primary route. This is the first route added, or the last one added with option --primary.

# open deployment application in browser (in primary route)

ei deploy go superheros

Routes can also be added one at a time with command route add (short for deploy route add). When a route is added to a deployment, it is set up immediately (possibly with a few seconds’ delay). A route can also have an empty hostname. In this case, the full DNS name of the route is the same as the DNS name of the domain.

# add additional route to deployment and make it primary

ei route add superheros heros -d demo.nativex.cloud --primary

# add route with empty hostname

ei route add home -d acme.com

Command route ls lists the routes of a deployment. Command route update updates a route, and command route rm deletes a route.

# list DNS routes of deployment

ei route ls superheros

ID HOST DNS DOMAIN TLS

1 superheros superheros.samples.nativex.cloud samples.nativex.cloud false

2 superheros superheros.test.nativex.cloud test.nativex.cloud true

5 superheros superheros.samples.einnovator.org samples.einnovator.org true

6 superheros superheros.einnovator.org einnovator.org true

Marketplace Solutions

Pre-bundled solutions are useful to install third-party software. Command market (short for marketplace) lists all available solutions. Both standalone solutions and catalog solutions are listed. With a parameter, as in market [q], only solutions whose name matches the specified query are listed. By default, Cloud Manager automatically sets up a few catalogs with a variety of solutions (community contributions, from EInnovator, and third-party). Other catalogs can be added via the UI or CLI.

# list available solutions

ei market

# list solutions matching query

ei market nginx

ei market sso

ei market mariadb

Command install is used to install a solution from the marketplace. A mandatory parameter specifies the name. Qualified names of the form catalog/solution match solutions only in the specified catalog. Names of the form /solution match only standalone solutions. Unqualified names match both catalog and standalone solutions. If multiple matches are found, qualified names need to be used.

#install nginx (from any catalog or standalone solution)

ei install nginx

# install solutions from specified catalogs

ei install community-charts/mariadb

ei install einnovator-solutions/einnovator-sso

ei install 3/mysql

# install custom standalone solution

ei install /nginx-custom

ei install acme-backend
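The three name forms can be summarized with a small classifier. This is only an illustration of the matching rules described above, written as a hypothetical shell helper (solution_scope); it is not how the CLI itself resolves names.

```shell
# Classify a solution name according to the matching rules above.
solution_scope() {
  case "$1" in
    /*)  echo "standalone" ;;   # /solution: standalone solutions only
    */*) echo "catalog" ;;      # catalog/solution: the named catalog only
    *)   echo "any" ;;          # unqualified: catalogs and standalone
  esac
}

for name in nginx community-charts/mariadb 3/mysql /nginx-custom; do
  printf '%-28s %s\n' "$name" "$(solution_scope "$name")"
done
```

A script could use such a check to insist on qualified names, which avoids the ambiguity that arises when an unqualified name matches more than one solution.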

CI/CD Pipelines

Cloud Manager allows CI/CD (continuous integration/delivery) pipelines to be run inside a cluster, in integration with Tekton. This allows Docker images to be built and application tests to be run on demand or automatically (at git commit time, with webhooks). The first step to set this up is to install the Tekton runtime in the cluster, and have the appropriate pipeline and task definitions in a space. This can be done via the UI (see this article for guidelines), or, if using the CLI, by installing them as regular marketplace solutions (see the CLI reference document). For example, to build a Java application with the Maven build tool, the pipeline jib-maven-pipeline and the task dependencies git-clone and jib-maven-task need to be installed.

To configure a deployment for image building, the general-purpose command deploy update can be used. You need to configure the Git repository from which source code is pulled (option --repo), the image name:tag to be built (option --buildImage), a registry with appropriate credentials to push the image to (option --registry or --reg), and finally the builder pipeline to use (option --builder). If you are pulling from a private Git repo, you also need to use option --vcs (or --git) to select the Git VCS where the credentials are configured.

To list all the registries and Git VCSs you have configured, use commands registry ls (or alias reg ls) and vcs ls (or alias git ls). You can define new ones via the UI, or via the CLI using commands registry create and vcs create.

# update deployment CI/CD details

ei deploy update superheros --builder=jib-maven-pipeline --repo=https://github.com/acme/acme-superheros \
  --buildImage=acme/superheros:1.1.1 --registry=dockerhub-dev99 --vcs=github-dev99

A useful way to display CI/CD related details with commands deploy get or deploy list is to use option -O=cicd (field/column set cicd) in block format (option -b).

# show CI/CD related details for deployment

ei deploy get superheros -O=cicd -b

ID 441

NAME superheros

BUILDER jib-maven-pipeline

BUILDERKIND Pipeline

REPO https://github.com/einnovator/einnovator-sample-superheros

VCS github-dev99

BUILDIMAGE einnovator/einnovator-sample-superheros:1.1.1

REGISTRY dockerhub-dev99

WORKSPACE VolumeTemplate

WEBHOOK <none>

To start a build manually use command deploy build. If option -o is specified, the details of the build are displayed after the build is started. Option --track tracks the progress of the build. If option -d is specified, the deployment is restarted automatically with the new image once the build is completed.

# start build for deployment

ei deploy build superheros -o

NAME STATUS MESSAGE STEP AGE DURATION

superheros-jib-maven-pipeline-5ffb6c9d Running Progress: 0 done <none> now <none>

To list ongoing or previous builds for a deployment use command build ls [deployId]. Without the optional parameter [deployId], the command lists all builds executed in a space (for deployments, jobs, and cronjobs). You can filter the builds listed by status (with option --status) and by name (with option -q). To remove a previous build, or kill a running one, use command build rm (or aliases build delete or build kill).

# list builds for deployment

ei build ls superheros

NAME STATUS MESSAGE STEP AGE DURATION

superheros-jib-maven-pipeline-5ffb0324 Succeeded Progress: 2 done <none> 7h 2m39s

superheros-jib-maven-pipeline-5ffb6c04 Succeeded Progress: 2 done <none> 4m 2m14s

superheros-jib-maven-pipeline-5ffb6c9d Running Progress: 1 done <none> 1m <none>

Other Services

The CLI tool for Cloud Manager is built to support multiple services and to be extensible. Devops enabled by Cloud Manager is just one of its many uses, although possibly one of the most important. The overall goal of the tool is to support multi-cluster/multi-cloud Kubernetes devops and micro-service development. Other services that are part of the EInnovator micro-service suite have built-in integration and command support as well, including: SSO Gateway, Notifications Hub, Document Store, Social Hub, and Payment Gateway. Since Cloud Manager can be set up to integrate with some of these services (e.g. the SSO Gateway for user IAM, and Social Hub to support space-level discussion/chat channels), the CLI comes in handy to manage these other services as well when doing devops in multi-user/team/enterprise mode. For example, the command user ls lists the users registered in the SSO Gateway. Commands channel ls and message ls list channels and messages.

Extending the CLI

The CLI tool, and all the libraries that it uses, are available as open-source software in public GitHub repositories. In the public GitHub repository for the CLI (see references), we describe how the tool can be extended to support commands for other services (by implementing a CommandRunner). If you plan to develop a micro-services architecture with several services, possibly integrating with some services of the EInnovator micro-service suite such as the SSO Gateway, you may want to extend the tool with commands for CRUD and other operations on your own services and entities.

The tool is implemented in Java, and the client libraries as Spring Boot starters. A future (re)implementation of the tool in Go is also being considered, once Go client libraries for the different services become available and mature.

What’s Next?

We covered a good number of topics, commands, and options, and we should wrap up. The best way to digest all of the above is to put it into practice and start using the CLI and Cloud Manager. The Common Recipes section in the reference manual has many more examples and covers additional topics, including: running Jobs and scheduling CronJobs, writing and running script files (option -E), connectors and service binding, and more. You may also want to try running the CLI tool in interactive mode (option -i), which supports auto-completion and helps in getting familiar with the commands.

References / Learning More

- Cloud Manager CLI Tool Reference

- @Medium: Cloud Manager, Kubernetes Better Dashboard

- @Medium: Building Java Spring Apps in the Cloud with Kubernetes Cloud Manager and Tekton Pipelines

- @TheNewStack: Cloud Manager, A New Multicloud PaaS Platform Built on Kubernetes

- Deploying Cloud Manager On-Premises

- Cloud Manager Reference Manual

- EInnovator CLI Tool GitHub public repository
